![]() Welcome to the 2nd Monocular Depth Estimation Challenge Workshop organized at

Welcome to the 2nd Monocular Depth Estimation Challenge Workshop organized at ![]()

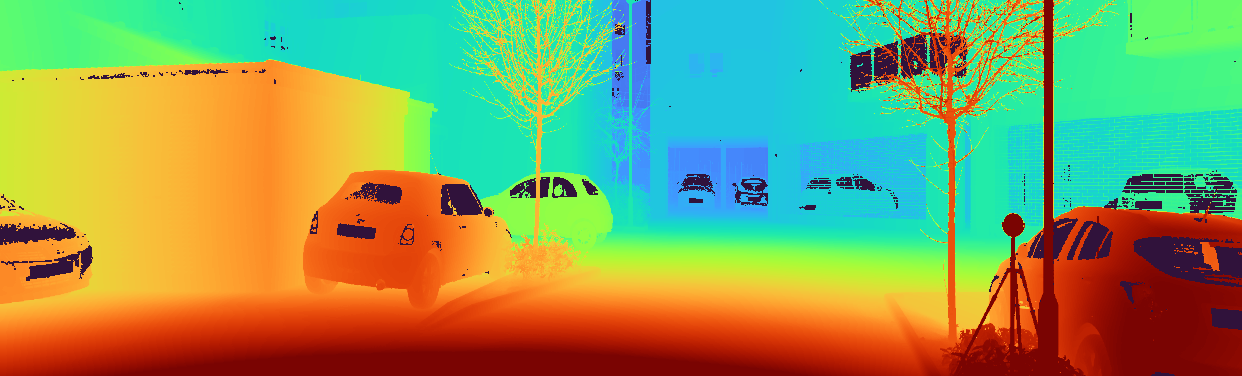

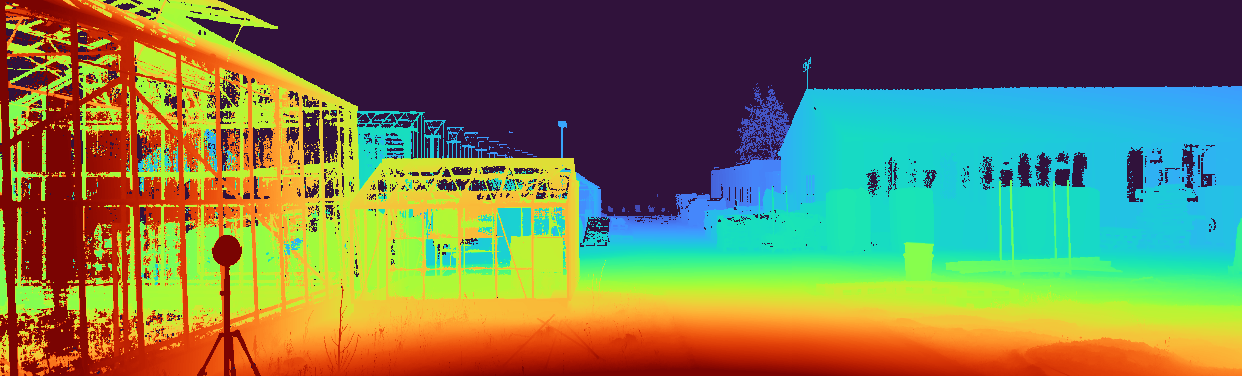

Monocular depth estimation (MDE) is an important low-level vision task, with application in fields such as augmented reality, robotics and autonomous vehicles. Recently, there has been an increased interest in self-supervised systems capable of predicting the 3D scene structure without requiring ground-truth LiDAR training data. Automotive data has accelerated the development of these systems, thanks to the vast quantities of data, the ubiquity of stereo camera rigs and the mostly-static world. However, the evaluation process has also remained focused on only the automotive domain and has been largely unchanged since its inception, relying on simple metrics and sparse LiDAR data.

This workshop seeks to answer the following questions:

- How well do networks generalize beyond their training distribution relative to humans?

- What metrics provide the most insight into the model’s performance? What is the relative weight of simple cues, e.g. height in the image, in networks and humans?

- How do the predictions made by the models differ from how humans perceive depth? Are the failure modes the same?

The workshop will therefore consist of two parts: invited keynote talks discussing current developments in MDE and a challenge organized around a novel benchmarking procedure using the SYNS dataset.

Paper

Paper

Videos

Videos

News

News

-

15 May 2023 —

Tentative workshop schedule released.

Tentative workshop schedule released. -

15 Apr 2023 —

Challenge winners have been announced! Thank you to all participants.

Challenge winners have been announced! Thank you to all participants. -

16 Mar 2023 —

Daniel Cremers confirmed as keynote speaker.

Daniel Cremers confirmed as keynote speaker. -

14 Mar 2023 —

Challenge is now concluded.

Challenge is now concluded. -

30 Jan 2023 —

Challenge dates have been announced!

Challenge dates have been announced! -

16 Jan 2023 —

Alex Kendall confirmed as keynote speaker.

Alex Kendall confirmed as keynote speaker. -

16 Jan 2023 —

Oisin Mac Aodha confirmed as keynote speaker.

Oisin Mac Aodha confirmed as keynote speaker. -

16 Jan 2023 —

Website is live!

Website is live!

Important Dates

Important Dates

- 01 Feb 2023 (00:00 UTC) — Challenge Development Phase Opens (Val)

- 01 Mar 2023 (00:00 UTC) — Challenge Final Phase Opens (Test)

- 14 Mar 2023 (23:59 UTC) — Challenge Submission Closes

- 21 Mar 2023 — Method Description Submission

- 28 Mar 2023 — Invited Talk Notification

- 18 Jun 2023 (08:30AM – 12:00PM PDT) — MDEC Workshop @ CVPR 2023

Schedule

Schedule

The workshop will take place on 18 Jun 2023 from 08:30AM – 12:00PM PDT.

NOTE: Times are shown in Pacific Daylight Time. Please take this into account if joining the workshop virtually.

All presentations (excluding challenge winners) will be in-person and streamed live on Zoom.

| Time (PDT) | Event |

|---|---|

| 08:30 - 08:35 | Introduction |

| 08:35 - 09:15 | Oisin Mac Aodha – Advancing Monocular Depth Estimation |

| 09:15 - 09:40 | Jaime Spencer – The Monocular Depth Estimation Challenge |

| 09:40 - 09:55 | Linh Trinh – Challenge Winner (Self-Supervised) |

| 09:55 - 10:10 | Wei Yin – Challenge Winner (Supervised) |

| 10:10 - 10:40 | Break |

| 10:40 - 11:20 |

Daniel Cremers – From Monocular Depth Estimation to 3D Scene Reconstruction |

| 11:20 - 12:00 | Alex Kendall – Building the Foundation Model for Embodied AI |

Keynote Speakers

Keynote Speakers

Oisin Mac Aodha

Assistant Professor

University of Edinburgh

Daniel Cremers

Professor

Technical University of Munich

Alex Kendall

CEO

Wayve

Oisin Mac Aodha is a Lecturer in Machine Learning in the School of Informatics at the University of Edinburgh. From 2016-2019, he was a postdoc in Prof. Pietro Perona’s Computational Vision Lab at Caltech. Prior to that, he was a postdoc in the Department of Computer Science at University College of London (UCL) with Prof. Gabriel Brostow and Prof. Kate Jones. He received his PhD from UCL in 2014, advised by Prof. Gabriel Brostow, and has an MSc in Machine Learning from UCL an BEng in electronic and computing engineering from the University of Galway. Along with being a Fellow of the Alan Turing Institute and a European Laboratory for Learning and Intelligent Systems (ELLIS) Scholar. His current research interests are in the areas of computer vision and machine learning, with a specific emphasis on shape and depth estimation, human-in-the-loop learning, and fine-grained image understanding.

Daniel Cremers

holds the Chair of Computer Vision and Artificial Intelligence at TU Munich.

He has coauthored over 500 publications in computer vision, machine learning, robotics and applied mathematics, many of which received awards.

He was listed among Germany’s top 40 researchers below 40 (Capital 2010), he received the Gottfried Wilhelm Leibniz Award 2016, the biggest award in German academia, and he is member of the Bavarian Academy of Sciences and Humanities.

He is initiator and co-director of the Munich Data Science Institute, the Munich Center for Machine Learning and ELLIS Munich.

He has served as founder, advisor and investor to numerous startups.

Alex Kendall

is the co-founder and CEO Wayve, a London head-quartered startup reimagining self-driving with embodied artificial intelligence.

Widely recognised as a world expert in this field, Alex’s leadership has led Wayve to become one of the most exciting startups in the burgeoning AV industry.

Alex was awarded his PhD at the University of Cambridge, where he studied as a Woolf Fisher Scholar.

Following his highly-cited research, he was elected a Research Fellow at Trinity College, University of Cambridge.

Alex was awarded the 2018 BMVA Prize, 2019 ELLIS European PhD Prize and was named on the 2020 Forbes 30 Under 30 list for contributions to technology entrepreneurship.

Challenge Winners

Challenge Winners

Congratulations to the challenge winners!

- Supervised: DJI&ZJU

- Self-Supervised: imec-IDLab-UAntwerp

| F | F (Edges) |

MEA | RMSE | Rel | Acc (Edges) |

Comp (Edges) |

||

|---|---|---|---|---|---|---|---|---|

| DJI&ZJU | D | 17.51 | 8.80 | 4.52 | 8.72 | 24.32 | 3.22 | 21.65 |

| Pokemon | D | 16.94 | 9.63 | 4.71 | 8.00 | 25.35 | 3.56 | 19.95 |

| cv-challenge | D | 16.70 | 9.36 | 4.91 | 8.63 | 24.33 | 3.02 | 18.07 |

| imec-IDLab- UAntwerp |

MS | 16.00 | 8.49 | 5.08 | 8.96 | 28.46 | 3.74 | 11.32 |

| GMD | MS | 14.71 | 8.13 | 5.17 | 8.97 | 29.43 | 3.75 | 17.29 |

| Baseline | S | 13.72 | 7.76 | 5.56 | 9.72 | 32.04 | 3.97 | 21.63 |

| DepthSquad | D | 12.77 | 7.68 | 5.17 | 8.83 | 29.92 | 3.56 | 35.26 |

| MonoViTeam | MSD* | 12.44 | 7.49 | 5.05 | 8.59 | 28.99 | 3.10 | 38.93 |

| USTC-IAT- United |

MS | 11.29 | 7.18 | 5.81 | 9.58 | 32.82 | 3.47 | 43.38 |

Teams

- DJI&ZJU: Wei Yin, Kai Cheng, Guangkai Xu, Hao Chen, Bo Li, Kaixuan Wang, Xiaozhi Chen

- Pokemon: Mochu Xiang, Jiahui Ren, Yufei Wang, Yuchao Dai

- cv-challenge: Chao Li, Qi Zhang, Zhiwen Liu, Yixing Wang

- DepthSquad: Myungwoo Nam, Huynh Thai Hoa, Khan Muhammad Umair, Sadat Hossain, S. M. Nadim Uddin

- imec-IDLab-UAntwerp: Linh Trinh, Ali Anwar, Siegfried Mercelis

- GMD: Baojun Li, Jianmian Huang

- MonoViTeam: Chaoqiang Zhao, Matteo Poggi, Fabio Tosi, Yang Tang, Stefano Mattoccia

- USTC-IAT-United: Jun Yu, Mohan Jing, Xiaohua Qi

Challenge

Challenge

Teams submitting to the challenge will also be required to submit a description of their method. As part of the CVPR Workshop Proceedings, we will publish a paper summarizing the results of the challenge, including a description of each method. All challenge participants surpassing the performance of the Garg baseline (by jspenmar) will be added as authors in this paper. Top performers will additionally be invited to present their method at the workshop. This presentation can be either in-person or virtually.

IMPORTANT: We have decided to expand this edition of the challenge beyond self-supervised models. This means we are accepting any monocular method, e.g. supervised, weakly-supervised, multi-task… The only restriction is that the model cannot be trained on any portion of the SYNS(-Patches) dataset and must make the final depth map prediction using only a single image.

[GitHub] — [Challenge] — [Paper]

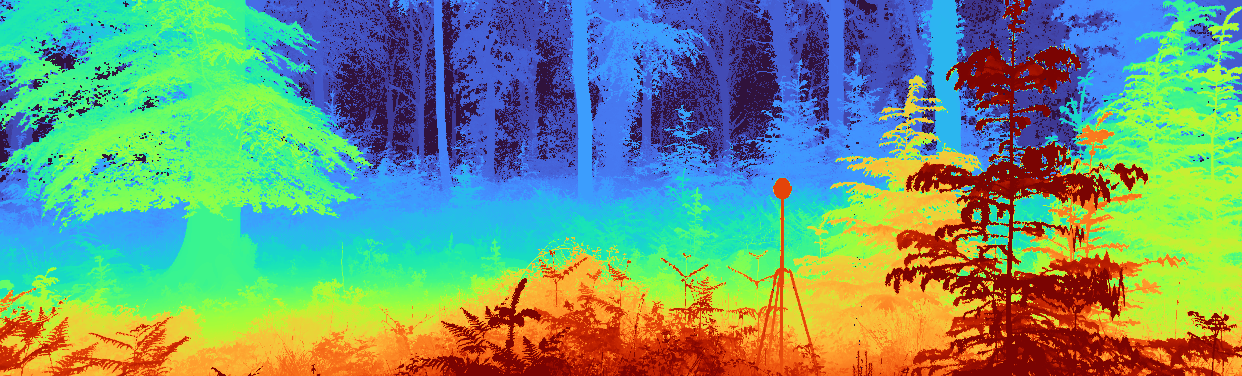

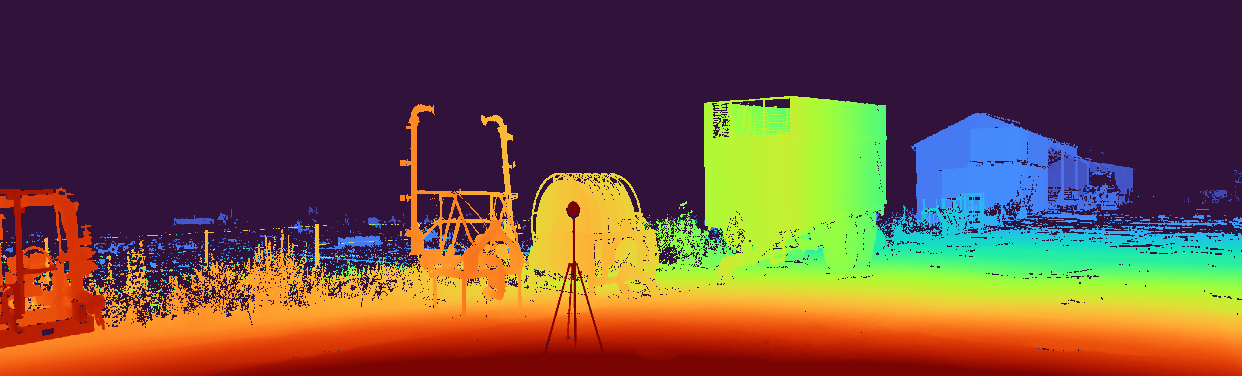

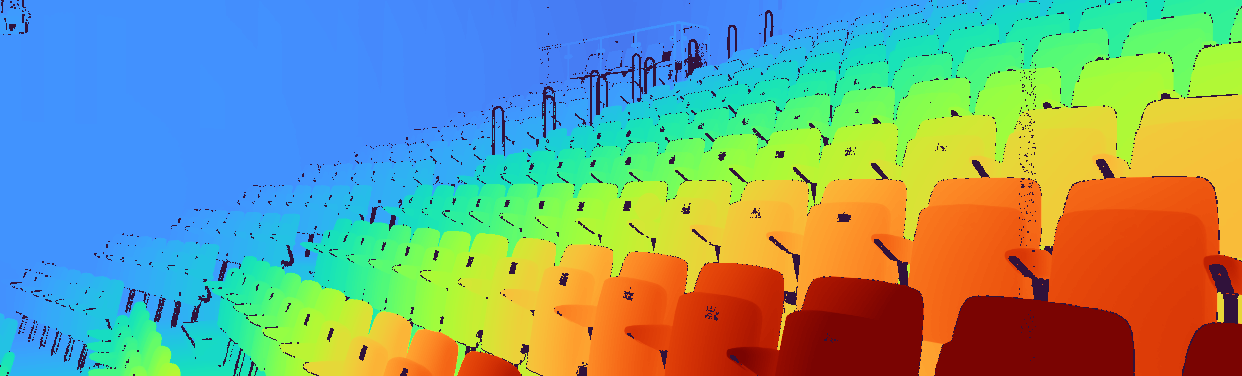

The challenge focuses on evaluating novel MDE techniques on the SYNS-Patches dataset proposed in this benchmark. This dataset provides a challenging variety of urban and natural scenes, including forests, agricultural settings, residential streets, industrial estates, lecture theatres, offices and more. Furthermore, the high-quality dense ground-truth LiDAR allows for the computation of more informative evaluation metrics, such as those focused on depth discontinuities.

The challenge is hosted on CodaLab. We have provided a GitHub repository containing training and evaluation code for multiple recent SotA approaches to MDE. These will serve as a competitive baseline for the challenge and as a starting point for participants. The challenge leaderboards use the withheld validation and test sets for SYNS-Patches. We additionally encourage evaluation on the public Kitti Eigen-Benchmark dataset.

Submissions will be evaluated on a variety of metrics:

- Pointcloud reconstruction: F-Score

- Image-based depth: MAE, RMSE, AbsRel

- Depth discontinuities: F-Score, Accuracy, Completeness

Challenge winners will be determined based on the pointcloud-based F-Score performance.

Organizers

Organizers

Jaime Spencer

Research Fellow

University of Surrey

Stella Qian

Research Fellow

Aston University

Chris Russell

Senior Applied Scientist

Amazon

Simon Hadfield

Senior Lecturer

University of Surrey

Erich Graf

Associate Professor

University of Southampton

James Elder

Professor

York University

Andrew Schofield

Professor

Aston University

Richard Bowden

Professor

University of Surrey