![]() Welcome to the 4th Monocular Depth Estimation Challenge Workshop organized at

Welcome to the 4th Monocular Depth Estimation Challenge Workshop organized at ![]()

Join us on 12 June 2025 from 14:00 – 18:00 CDT over Zoom from HERE

Monocular depth estimation (MDE) is an important low-level vision task with applications in fields such as augmented reality, robotics, and autonomous vehicles. In 2024, the field was dominated by generative approaches, with DepthAnything representing the transformer-based solution and Marigold being a denoising diffusion model based on the popular Text-to-Image LDM Stable Diffusion. Even before that, there has been an increased interest in self-supervised systems capable of predicting the 3D scene structure without requiring ground-truth LiDAR training data. The automotive industry accelerated the development of these systems thanks to the vast quantities of data and the ubiquity of stereo camera rigs. However, the evaluation process has remained focused on in-domain evaluation, relying on simple metrics and sparse LiDAR data.

This workshop seeks to answer the following questions:

- How well do networks generalize beyond their training distribution relative to humans?

- What metrics provide the most insight into the model’s performance?

- How do the predictions made by the models differ from how humans perceive depth?

The workshop will consist of two parts: invited keynote talks discussing current developments in MDE and a challenge organized around a benchmarking procedure using the SYNS dataset.

Paper

Paper

Video

Video

Coming soon…

News

News

-

30 May 2025 —

MDEC schedule now available!

MDEC schedule now available! -

24 Apr 2025 —

Challenge paper out on arXiv!

Challenge paper out on arXiv! - 01 Feb 2025 — 🏆 Submissions to the challenge are now open!

-

30 Jan 2025 —

Konrad Schindler confirmed as keynote speaker.

Konrad Schindler confirmed as keynote speaker. -

07 Jan 2025 —

Yiyi Liao confirmed as keynote speaker.

Yiyi Liao confirmed as keynote speaker. -

06 Jan 2025 —

Peter Wonka confirmed as keynote speaker.

Peter Wonka confirmed as keynote speaker. -

05 Jan 2025 —

Website is live!

Website is live!

Important Dates

Important Dates

- 01 Feb 2025 (00:00 UTC) — Challenge Development Phase Opens (Val)

- 01 Mar 2025 (00:00 UTC) — Challenge Final Phase Opens (Test)

- 21 Mar 2025 (23:59 UTC) — Challenge Submission Closes

- 01 Apr 2025 — Method Description Submission

- 7 May 2025 — Invited Talk Notification

- 12 Jun 2025 — MDEC Workshop @ CVPR 2025

Schedule

Schedule

The workshop will take place on 12 June 2025 from 14:00 – 18:00 CDT. You can join remotely over Zoom from HERE

NOTE: Times are shown in Central Daylight Time. Please take this into account if you plan to join the workshop virtually.

| Time (PDT) | Event |

|---|---|

| 14:00 - 14:10 | Introduction |

| 14:10 - 14:50 | Konrad Schindler |

| 14:50 - 15:30 | Peter Wonka |

| 15:30 - 16:00 | Break |

| 16:00 - 16:20 | Matteo Poggi – The Monocular Depth Estimation Challenge |

| 16:20 - 16:50 | Shuaihang Wang – HRI (Challenge Participant) |

| Mykola Lavreniuk – Lavreniuk (Challenge Participant) | |

| Zihan Qin – HIT-AIIA (Challenge Participant) | |

| 16:50 - 17:30 | Yiyi Liao |

| 17:30 - 17:40 | Closing Notes |

Keynote Speakers

Keynote Speakers

Peter Wonka

Full Professor

KAUST

Yiyi Liao

Assistant Professor

Zhejiang University

Konrad Schindler

Full Professor

ETH Zurich

Peter Wonka is a full professor of computer science at King Abdullah University of Science and Technology (KAUST). Peter Wonka received his doctorate in computer science from the Technical University of Vienna. Additionally, he received a Master of Science in Urban Planning from the same institution. After his PhD, Dr. Wonka worked as a postdoctoral researcher at the Georgia Institute of Technology and as faculty at Arizona State University. His research publications tackle various computer vision, computer graphics, and machine learning topics. The current research focuses on deep learning, generative models, and 3D shape analysis and reconstruction.

Yiyi Liao is an assistant professor at Zhejiang University. Prior to that, she received her Ph.D. degree from Zhejiang University and subsequently worked as a Postdoc at MPI for Intelligent Systems. Her research interest lies in 3D computer vision and immersive media, including reconstruction, generation, and compression. She received the Best Robot Vision Paper award at ICRA 2024. She serves as a program chair for 3DV 2025 and an area chair for CVPR and NeurIPS.

Konrad Schindler received the Diplomingenieur (M.Tech.) degree in photogrammetry from the Vienna University of Technology, Vienna, Austria, in 1999 and the Ph.D. degree from the Graz University of Technology, Graz, Austria, in 2003. He was a Photogrammetric Engineer in the private industry and held researcher positions at the Computer Graphics and Vision Department, Graz University of Technology, the Digital Perception Laboratory, Monash University, Melbourne, VIC, Australia, and the Computer Vision Laboratory, ETH Zürich, Zürich, Switzerland. He was an Assistant Professor of Image Understanding with TU Darmstadt, Darmstadt, Germany, in 2009. Since 2010, he has been a Tenured Professor of Photogrammetry and Remote Sensing with ETH Zürich. His research interests include computer vision, photogrammetry, and remote sensing, with a focus on image understanding and information extraction reconstruction. Dr. Schindler has been serving as an Associate Editor of the Journal of Photogrammetry and Remote Sensing of the International Society for Photogrammetry and Remote Sensing (ISPRS) since 2011, and previously served as an Associate Editor of the Image and Vision Computing Journal from 2011 to 2016. He was the TC President of the ISPRS from 2012 to 2016.

Challenge Winners

Challenge Winners

Congratulations to the challenge winners – HRI!

| F (↑) | F (↑) (Edges) |

MAE (↓) | RMSE (↓) | AbsRel (↓) | Acc (↑) (Edges) |

Comp (↓) (Edges) |

δ<1.25 (↑) | δ<1.25^2 (↑) | δ<1.25^3 (↑) | ||

|---|---|---|---|---|---|---|---|---|---|---|---|

| HRI | A | 23.05 | 9.37 | 3.19 | 5.64 | 21.17 | 3.30 | 6.77 | 77.54 | 91.79 | 95.78 |

| Anonymous | 22.95 | 9.21 | 3.44 | 6.48 | 24.65 | 3.27 | 6.59 | 76.17 | 90.33 | 94.73 | |

| PICO-MR | 22.58 | 8.26 | 3.41 | 5.89 | 22.02 | 4.02 | 11.28 | 76.18 | 90.95 | 95.44 | |

| Lavreniuk | A | 20.81 | 9.12 | 3.40 | 5.77 | 20.48 | 3.20 | 7.27 | 75.33 | 91.33 | 95.97 |

| Mach-Calib | A | 20.69 | 8.75 | 3.63 | 6.78 | 23.36 | 3.22 | 13.96 | 74.31 | 90.44 | 95.12 |

| Anonymous | 20.50 | 8.63 | 3.68 | 7.07 | 23.96 | 3.17 | 13.66 | 74.00 | 90.32 | 95.01 | |

| EasyMono | A | 20.24 | 7.30 | 3.43 | 5.91 | 22.47 | 3.91 | 20.98 | 74.73 | 90.55 | 95.24 |

| Insta360-Percep | A | 19.41 | 8.98 | 3.25 | 5.73 | 21.29 | 2.75 | 15.54 | 77.91 | 92.03 | 95.91 |

| Anonymous | 18.85 | 8.27 | 4.06 | 7.06 | 26.35 | 3.11 | 13.75 | 67.57 | 87.98 | 94.18 | |

| HIT-AIIA | A | 18.53 | 9.74 | 3.83 | 6.29 | 23.96 | 3.06 | 9.90 | 69.12 | 88.49 | 94.31 |

| Anonymous | 18.16 | 6.32 | 3.57 | 6.13 | 24.07 | 4.51 | 15.75 | 71.62 | 89.12 | 94.74 | |

| Anonymous | 17.46 | 9.35 | 4.43 | 7.30 | 29.19 | 3.27 | 17.69 | 65.64 | 86.45 | 93.09 | |

| Anonymous | 17.46 | 9.35 | 4.43 | 7.30 | 29.19 | 3.27 | 17.69 | 65.64 | 86.45 | 93.09 | |

| Anonymous | 17.45 | 9.35 | 4.43 | 7.30 | 29.20 | 3.27 | 17.69 | 65.64 | 86.46 | 93.10 | |

| Robot02-vRobotit | A | 17.25 | 8.27 | 3.90 | 6.54 | 23.64 | 3.07 | 22.85 | 71.07 | 89.38 | 94.80 |

| Anonymous | 17.01 | 9.20 | 4.44 | 7.34 | 29.42 | 3.25 | 18.55 | 65.72 | 86.67 | 93.35 | |

| Anonymous | 17.01 | 9.20 | 4.44 | 7.34 | 29.42 | 3.25 | 18.55 | 65.72 | 86.67 | 93.35 | |

| Marigold | A | 17.01 | 9.19 | 4.44 | 7.34 | 29.42 | 3.25 | 18.56 | 65.72 | 86.67 | 93.35 |

| Anonymous | 16.79 | 9.10 | 4.51 | 7.44 | 30.10 | 3.32 | 17.65 | 65.24 | 86.22 | 93.05 | |

| Anonymous | 16.67 | 7.49 | 4.01 | 6.92 | 26.34 | 3.07 | 26.32 | 68.17 | 88.47 | 94.42 | |

| Anonymous | 16.47 | 8.99 | 4.74 | 7.71 | 30.93 | 3.26 | 18.75 | 62.26 | 84.27 | 91.90 | |

| ViGIR LAB | 16.25 | 8.54 | 5.37 | 9.00 | 43.72 | 3.29 | 14.15 | 61.99 | 82.89 | 90.46 | |

| Anonymous | A | 15.31 | 8.46 | 4.62 | 7.46 | 30.63 | 3.42 | 15.59 | 62.41 | 84.89 | 92.43 |

| Anonymous | 15.03 | 7.64 | 5.48 | 9.29 | 38.59 | 3.70 | 20.33 | 58.82 | 81.79 | 90.85 | |

| Depth Anything v2 | 14.34 | 6.72 | 4.84 | 9.13 | 33.57 | 2.99 | 35.54 | 66.34 | 86.02 | 92.44 | |

| HCMUS-DepthFusion | A | 14.20 | 7.81 | 4.90 | 7.96 | 33.55 | 3.32 | 17.66 | 61.09 | 83.90 | 92.21 |

| ReadingLS | 13.52 | 6.60 | 4.56 | 7.90 | 30.15 | 3.14 | 35.94 | 65.34 | 86.55 | 93.46 |

Legend: Baselines; 3rd MDEC winning team; A – affine-invariant predictions; for each metric we highlight absolute best, second best and third best

Challenge

Challenge

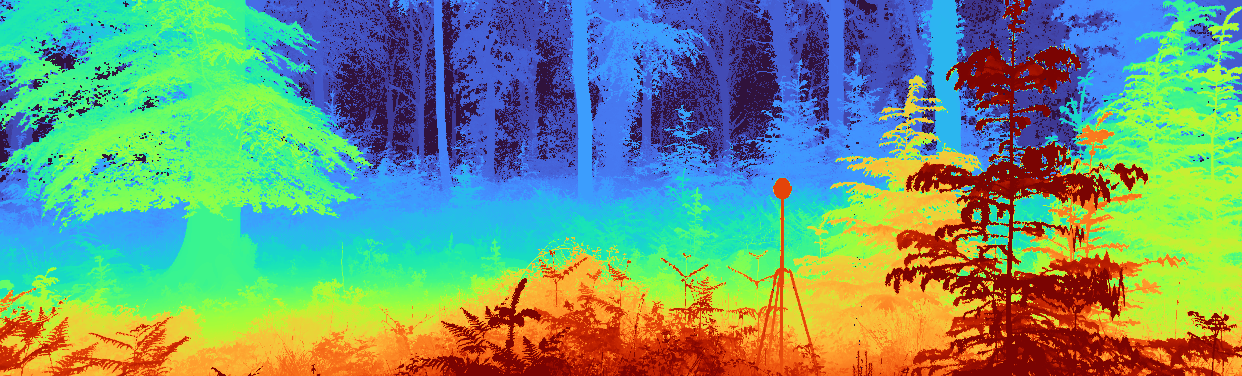

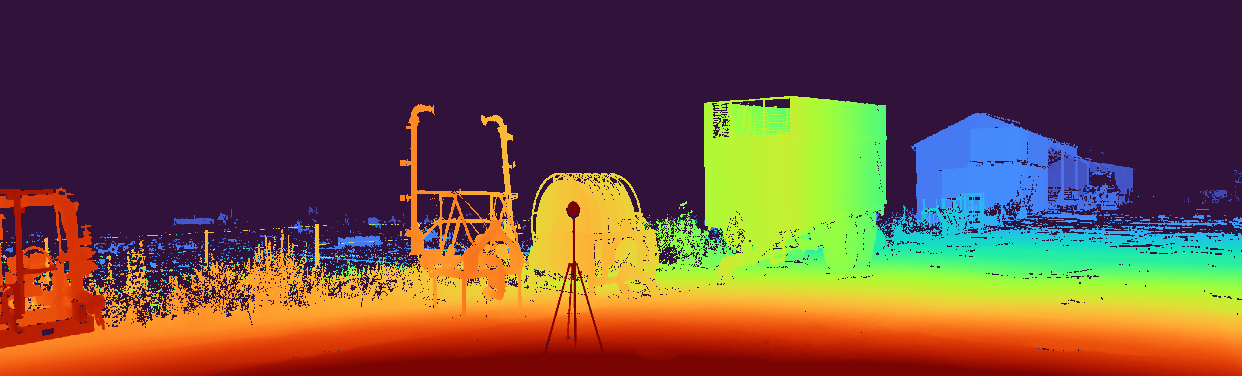

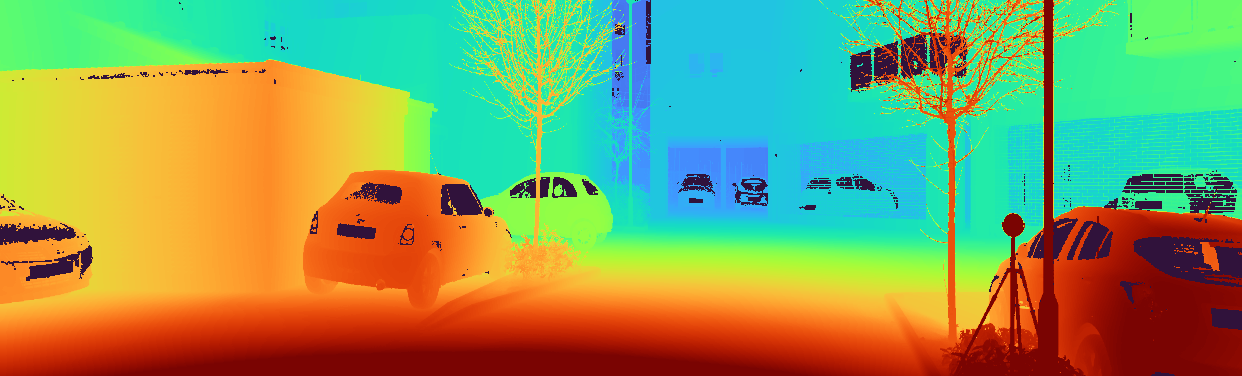

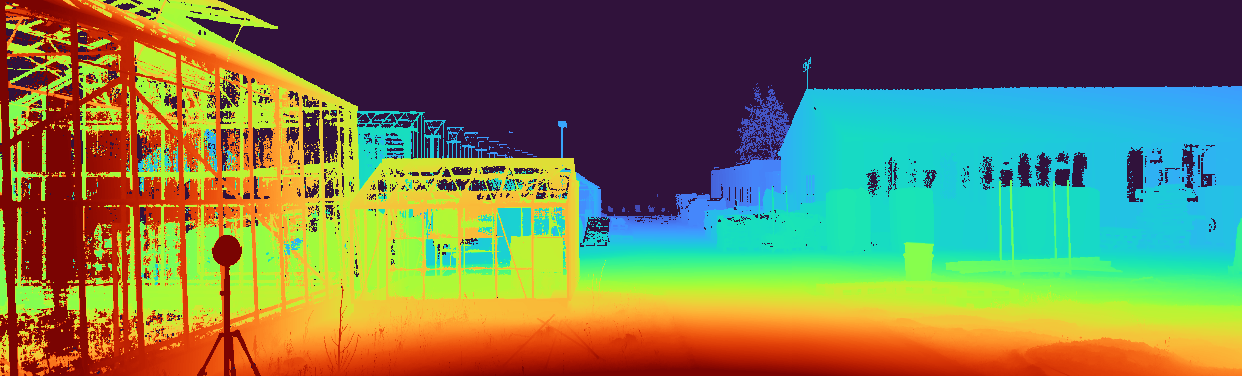

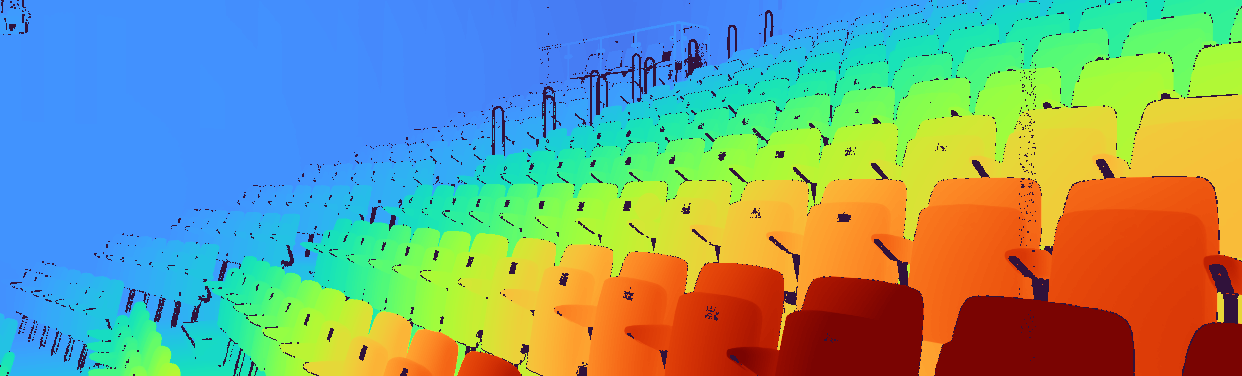

The challenge focuses on evaluating novel MDE techniques on the SYNS-Patches dataset. This dataset provides a challenging variety of urban and natural scenes, including forests, agricultural settings, residential streets, industrial estates, lecture theatres, offices, and more. Furthermore, the high-quality, dense ground-truth LiDAR allows for the computation of more informative evaluation metrics, such as those focused on depth discontinuities.

[GitHub Starter Pack] — [CodaLab Challenge]

⚡ What’s new in MDEC 2025?

- 📐 New prediction types: The challenge became more accessible thanks to the added support of

affine-invariantpredictions.metricandscale-invariantpredictions are also automatically supported.disparitypredictions, which were supported in previous challenges, are also accepted. - 🤗 Pre-trained Model Support: We provide ready-to-use scripts for off-the-shelf methods: Depth Anything V2 (

disparity) and Marigold (affine-invariant). These will serve as a competitive baseline for the challenge and a starting point for participants. - 📊 Updated Evaluation Pipeline: The CodaLab grader code has been updated to accommodate the newly supported prediction types.

🚀 How to participate?

- Check out the new starter pack GitHub. The mdec_2025 folder contains scripts generating valid submissions for Marigold (

affine-invariant) and Depth Anything v2 (disparity). - Identify the prediction type of your method and generate a valid submission:

valsplit for the “Development” phase andtestsplit for the “Final” phase. - Register at the CodaLab Challenge site, check the submission constraints and extra conditions, and submit to the leaderboard.

The phases are open according to the following schedule:

- “Development”: Feb 01 - Mar 01

- “Final”: Mar 01 - Mar 21

📊 Evaluation

Submissions will be evaluated on a variety of metrics:

- Pointcloud reconstruction: F-Score

- Image-based depth: MAE, RMSE, AbsRel

- Depth discontinuities: F-Score, Accuracy, Completeness

The leading metric is F-Score (based on the point cloud), denoted as F (↑) in the leaderboard. Challenge winners will be determined based on the performance ranked by the leading metric on the withheld validation (“Development” phase) and the test (“Final” phase) sets of the SYNS-Patches dataset.

To measure the performance locally with other datasets or troubleshoot scoring issues within the challenge, refer to the evaluation code.

📈 Baselines

This year, we switched to LSE-based alignment between predictions and ground truth maps to accept various types of predictions.

In addition to previously accepted disparity prediction methods, we welcome affine-invariant, scale-invariant, and metric types.

Accordingly, we updated the benchmark with more recent baselines, such as Marigold (affine-invariant), Depth Anything v2 (disparity), and the winners of the 3rd edition of the MDEC challenge, whose performances are reported below.

| F (↑) | F (↑) (Edges) |

MAE (↓) | RMSE (↓) | AbsRel (↓) | Acc (↑) (Edges) |

Comp (↓) (Edges) |

δ<1.25 (↑) | δ<1.25^2 (↑) | δ<1.25^3 (↑) | |

|---|---|---|---|---|---|---|---|---|---|---|

| PICO-MR | 21.07 | 8.77 | 3.22 | 5.60 | 20.33 | 3.69 | 15.41 | 0.7559 | 0.9125 | 0.9590 |

| EVP++ | 19.66 | 9.02 | 3.20 | 5.49 | 19.03 | 2.66 | 9.28 | 0.7553 | 0.9182 | 0.9661 |

| Marigold | 18.64 | 9.26 | 3.87 | 6.49 | 24.37 | 2.90 | 20.09 | 0.6903 | 0.8860 | 0.9453 |

| Depth Anything v2 | 14.34 | 7.94 | 4.16 | 7.94 | 25.48 | 2.64 | 30.05 | 0.6907 | 0.8849 | 0.9469 |

| Garg’s Baseline | 11.38 | 6.03 | 4.62 | 7.58 | 31.15 | 4.01 | 41.24 | 0.5842 | 0.8354 | 0.9251 |

📚 Workshop proceedings

As part of the CVPR Workshop Proceedings, we will publish a paper summarizing the results of the challenge. The following conditions must be met to have the method included in the paper:

- The method surpasses the performance of the baselines in the leading metric (F-Score);

- The method should not be trivial;

- Each prediction is made using a single corresponding input image;

Once the challenge has finished, we will contact the participants meeting the criteria above to request information about their affiliation, a short description of their method, and the method’s source code. Participants not providing this information will not be added to the publication; their submission will stay anonymous in the leaderboard.

Selected top performers will also be invited to present their methods at the workshop. The presentation can be held either in person or virtually. This is mandatory; refusal to do so will result in an invalidated submission and removal from the paper.

Feedback

Please feel free to reach out with any questions, concerns, or feedback using the address below — this is the quickest way to contact us. If your topic relates to the challenge, in addition to emailing us, consider opening a discussion on the CodaLab forum.

🤵 Organizers

Anton Obukhov

Principal Research Scientist

Huawei Research Center Zürich

Ripudaman Singh Arora

Principal ML Researcher

Blue River Technology

Jaime Spencer

Data Engineer

Oxa

Fabio Tosi

Junior Assistant Professor

University of Bologna

Matteo Poggi

Tenure-Track Assistant Professor

University of Bologna

Chris Russell

Associate Professor

Oxford Internet Institute

Simon Hadfield

Associate Professor

University of Surrey

Richard Bowden

Professor

University of Surrey